Nobody's debating whether AI belongs in the military anymore. That ship sailed. AI is already embedded in logistics systems, training simulations, intelligence analysis, and even service delivery platforms that help soldiers find out where to get their car inspected on base.

The actual debate, the one worth paying attention to, is about governance. Who controls it? Who's accountable when it gets something wrong? And how do you build oversight into systems that operate faster than any human can review in real time?

The Governance Question Is the Only Question That Matters

OpenAI published an explanation of its agreement with the Department of Defense that reads like a careful exercise in boundary-setting. Strict guardrails. Legal compliance. Oversight structures. It's not a breathless press release about capability. It's a document about constraints. That's telling.

Meanwhile, military scholars at Army University Press are working through how AI reshapes strategic doctrine and introduces entirely new categories of risk. Not hypothetical risks. Structural ones, the kind that emerge when you plug machine-speed decision-making into institutions built around human judgment and chain of command.

Both perspectives land in the same place: AI in defense has to operate inside governance, not adjacent to it. Military institutions run on doctrine, accountability, and clearly defined authority. AI doesn't get a special exemption from that structure. It has to plug into it, with clear decision rights, documented oversight, traceable outputs, and human authority preserved at every consequential moment.

Here's the part people dance around: AI itself isn't destabilizing. Ungoverned AI is. Innovation inside structure creates lasting capability. Innovation outside structure creates volatility that erodes trust and, eventually, legitimacy.

The Anthropic-OpenAI Split: A Governance Stress Test in Real Time

If you want to understand why governance matters, look at what just happened between the Pentagon, Anthropic, and OpenAI. It's the clearest real-world illustration we've had so far.

Anthropic held a $200 million prototype agreement with the Department of Defense. But when the Pentagon requested that Anthropic remove restrictions preventing its AI from being used for mass domestic surveillance and fully autonomous weapons, Anthropic refused, stating it could not "in good conscience" accede to the request. The company drew a line: its models incorporate embedded guardrails, and it would not strip them out to give the military unrestricted access.

The fallout was swift. The administration designated Anthropic a Supply Chain Risk to National Security, and President Trump ordered federal agencies to stop using Anthropic's technology. Within hours, OpenAI announced its own deal with the Pentagon for classified deployment.

The two companies' approaches reveal a genuine philosophical split. Anthropic took a moral stand that won it many supporters but cost it its government work, while OpenAI took a pragmatic, legal approach that is ultimately softer on the Pentagon. Anthropic wanted explicit contractual prohibitions on specific use cases. OpenAI accepted the Pentagon's "all lawful uses" language but pointed to existing laws as the constraining mechanism. OpenAI CEO Sam Altman later admitted the company "shouldn't have rushed" to get the deal out but maintained that OpenAI was trying to de-escalate the standoff.

OpenAI's published position outlined three areas where its models cannot be used: mass domestic surveillance, autonomous weapon systems, and high-stakes automated decisions like social credit scoring. The company says it maintains control over the safety rules governing its models and will embed its red lines into model behavior rather than relying solely on contract language. Critics have questioned whether that's enforceable in classified settings the company is entering for the first time.

This is a governance story, not a technology story. Both companies have powerful models. The divergence is about where the guardrails live and who gets to enforce them. Anthropic bet on hard contractual limits. OpenAI bet on legal frameworks and technical controls. Neither approach is obviously wrong, but they carry very different risk profiles. And the consequences of getting it wrong are not theoretical.

Governance Is Not Surveillance (and Conflating Them Is Dangerous)

This is the part of the conversation that keeps getting muddled, so let's be direct: governance and surveillance are not the same thing. In fact, governance is the mechanism designed to prevent surveillance overreach.

When we talk about AI governance in military contexts, we're talking about audit trails, accountability chains, defined scope, and human authority over consequential decisions. We're talking about the ability to reconstruct why a system did what it did, and who approved it. That's oversight. It protects both mission integrity and civil liberties.

Surveillance, particularly mass domestic surveillance, is the absence of those constraints. It's what happens when powerful tools operate without boundaries, without defined scope, and without meaningful human review. The entire reason Anthropic's standoff with the Pentagon became national news is that the company recognized this distinction and refused to blur it.

This matters for every organization working with AI in government contexts, not just defense. When agencies deploy AI-enhanced search, predictive analytics, or content management tools, governance is what ensures those tools serve their intended purpose and nothing more. Scope limitations, data boundaries, access controls, and audit logging aren't bureaucratic overhead. They're the architecture that keeps useful AI tools from becoming instruments of overreach.

The temptation, especially in national security contexts, is to treat governance as friction that slows down capability. But the opposite is true. Governance is what makes AI deployment sustainable because it preserves the legitimacy that public institutions depend on. Strip out the guardrails and you might move faster in the short term, but you'll erode the public trust that makes long-term adoption possible.

The Risks Are Real and They're Not Hypothetical

Let's walk through the hard stuff, because glossing over it is how you end up with bad policy.

Escalation risk is probably the scariest. AI operates at machine speed. In cyber operations or autonomous contexts, decision timelines compress from hours to seconds. That sounds like an advantage until you realize that faster responses can unintentionally escalate a conflict before anyone with stars on their shoulders has a chance to weigh in.

Accountability gaps follow close behind. If an AI-assisted system shapes targeting analysis or intelligence triage, who owns the outcome when it's wrong? The answer has to be unambiguous: the system assists, the commander decides, and the chain of accountability stays human. Without that clarity, you get organizational paralysis at best and eroded legitimacy at worst.

Bias and data integrity are quieter problems but no less dangerous. AI learns from historical data. If that data is incomplete, skewed, or just old, the outputs inherit those flaws. In a military context, a flawed output isn't a bad product recommendation. It can have strategic consequences.

Cyber vulnerabilities deserve their own category. AI systems can be attacked through adversarial manipulation or data poisoning. Any defense AI deployment has to be hardened, red-teamed, and continuously monitored. This isn't a one-time certification. It's ongoing.

Ethical drift might be the most insidious risk of all. It happens gradually. Teams start trusting the AI's recommendations a little more, scrutinizing them a little less. Over time, human oversight becomes performative rather than substantive. Governance frameworks exist specifically to prevent this slow slide.

None of these are theoretical concerns. They're governance challenges with known mitigations, if you actually implement them.

What Military AI Actually Looks Like Day to Day

Here's where the conversation usually gets distorted, because popular imagination jumps straight to autonomous weapons. The reality is a lot more mundane and, frankly, more interesting.

Adaptive training simulations are one of the most impactful applications. AI-driven war games dynamically adjust scenarios based on what the trainee actually does, creating responsive environments that static exercises can't match. Trainees face unpredictable situations that force genuine decision-making rather than pattern matching against a script.

Predictive readiness might sound boring, but it's transformative. AI models analyze maintenance logs, logistics data, and equipment telemetry to forecast when something is about to fail. Keeping aircraft flying and vehicles rolling through predictive maintenance beats reactive scrambling every time.
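To make the forecasting idea concrete, here is a minimal sketch. It is a deliberately simple stand-in, not a machine-learning model and not any fielded system: it fits a straight line to a telemetry reading over operating hours and estimates how many hours remain before the trend crosses a maintenance threshold. The function name, the vibration readings, and the threshold value are all hypothetical.

```python
def hours_until_threshold(hours, readings, threshold):
    """Estimate operating hours left before a rising reading hits `threshold`.

    Uses an ordinary least-squares line through (hours, readings).
    Returns None when the readings are not trending upward.
    """
    n = len(hours)
    if n < 2 or n != len(readings):
        raise ValueError("need at least two paired observations")

    mean_x = sum(hours) / n
    mean_y = sum(readings) / n
    sxx = sum((x - mean_x) ** 2 for x in hours)
    if sxx == 0:
        raise ValueError("all observations share the same hour value")

    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(hours, readings)) / sxx
    intercept = mean_y - slope * mean_x

    if slope <= 0:
        return None  # no upward trend, so no projected crossing

    crossing_hour = (threshold - intercept) / slope
    # Clamp at zero: the threshold may already have been crossed.
    return max(0.0, crossing_hour - hours[-1])


# Hypothetical vibration readings taken every 10 operating hours.
remaining = hours_until_threshold([0, 10, 20, 30], [1.0, 1.5, 2.0, 2.5], threshold=4.0)
print(f"Projected hours until maintenance threshold: {remaining:.1f}")
```

A real readiness model would draw on far more signals than one trend line, but the governance point survives the simplification: the forecast is an input to a maintainer's decision, and the data and threshold behind it should be inspectable.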

Intelligence and language training uses AI to support analytical reasoning and language acquisition through scenario-based tools. It's structured, measurable, and low-risk, exactly the kind of environment where AI can improve performance without introducing battlefield consequences.

Training environments are the sweet spot for military AI adoption. They provide controlled spaces to prove value, build institutional trust, and work out governance kinks before anything touches an operational context.

Digital.gov outlines broader innovation principles for government that align well here: innovation must connect to mission delivery and accountability, not just technical novelty.

A Practical Example: AI-Enhanced Search for Army MWR

Not every military AI project involves satellites or cyber defense. Some of them are about helping a soldier's spouse figure out which base has childcare openings.

The U.S. Army's Morale, Welfare, and Recreation (MWR) program manages a massive digital ecosystem: hundreds of installations, thousands of pages, and users who need installation-specific information fast. The problem was classic content management at scale. Relevant content existed, but people couldn't find it.

An AI-enhanced search implementation tackled this through intent recognition, improved metadata alignment, installation-aware filtering, and faster retrieval. Content discovery and relevance improved significantly across the ecosystem.
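As a rough illustration of what "installation-aware filtering" can mean, the sketch below restricts results to the user's installation before ranking by keyword overlap. It is an assumption-laden toy, not the MWR implementation: the `Page` structure, the `search` function, the installation names, and the overlap scoring are all hypothetical, and real intent recognition would be considerably more involved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Page:
    title: str
    installation: str       # which base this page belongs to
    keywords: frozenset     # metadata terms attached to the page


def search(pages, query, installation):
    """Return titles for one installation, ranked by keyword overlap with the query."""
    terms = set(query.lower().split())
    scored = []
    for page in pages:
        # Scope first: content from other installations is never considered.
        if page.installation != installation:
            continue
        overlap = len(terms & page.keywords)
        if overlap:
            scored.append((overlap, page.title))
    # Highest overlap first, then alphabetical for a stable order.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [title for _, title in scored]


# Hypothetical sample content for two made-up installations.
SAMPLE_PAGES = [
    Page("Child Care Openings", "Fort Example",
         frozenset({"childcare", "child", "care", "openings"})),
    Page("Vehicle Inspection Hours", "Fort Example",
         frozenset({"vehicle", "car", "inspection", "hours"})),
    Page("Child Care Openings", "Camp Sample",
         frozenset({"childcare", "openings"})),
]

print(search(SAMPLE_PAGES, "childcare openings", "Fort Example"))
# prints ['Child Care Openings']
```

Filtering by installation before scoring is a scope decision as much as a relevance one: the tool can only surface content it has been explicitly allowed to consider.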

What made this project work wasn't just the technology. It was the governance. Defined scope. Clear KPIs. Human oversight. A focus on service delivery rather than experimentation for its own sake.

This is the pattern that actually scales in military and government contexts: enhancement with guardrails. If you're interested in what that looks like at the platform level, we've written about AI use cases for government websites that actually scale and how to build a safe AI use policy for federal agencies.

The Benefits Are Genuine, With a Caveat

AI adoption in defense keeps accelerating because it delivers measurable value. Speed of analysis is the obvious win. AI processes satellite imagery, signals intelligence, and logistics datasets orders of magnitude faster than manual workflows. Predictive maintenance reduces aircraft downtime. Machine learning detects anomalies across defense networks that human analysts would miss. AI-enabled systems can reduce personnel exposure in hazardous environments.

But here's the caveat that doesn't show up in vendor pitches: benefits remain benefits only when governance stays central. An AI system that speeds up analysis by 10x but operates outside auditable oversight is a liability dressed up as an asset.

Ethics as Architecture, Not Afterthought

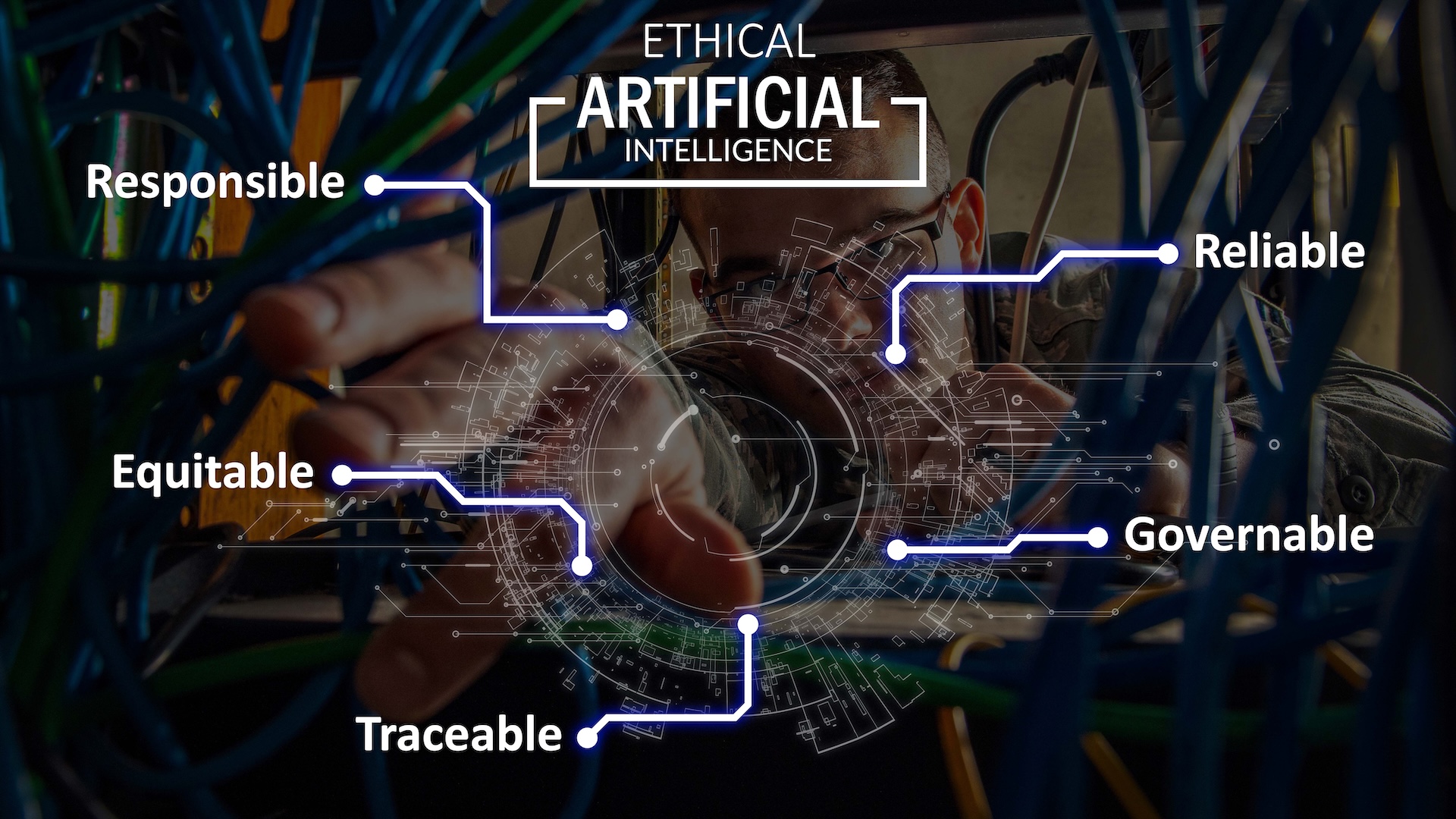

The Department of Defense formally adopted five AI ethical principles: responsibility, equitability, traceability, reliability, and governability. Those are good words. What matters is whether they get operationalized.

Responsibility means humans stay accountable, not in a vague "someone's in charge" sense, but with named individuals who own outcomes. Traceability means decisions can be reconstructed and audited after the fact. Governability means systems can be controlled, overridden, and shut down when needed.

In practice, this looks like human-in-the-loop controls where AI recommends but humans approve consequential actions. It looks like AI review boards (cross-functional committees that evaluate systems before they go operational). It looks like audit logging that captures inputs, outputs, and model versions. Controlled data environments. Red team testing that actively hunts for bias and adversarial weaknesses. Override protocols that include manual shutdown capability.
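Two of those controls, human-in-the-loop approval and audit logging, are easy to sketch in code. What follows is a minimal illustration under stated assumptions, not a reference design: `AuditRecord`, `ApprovalGate`, and every field name are hypothetical. The idea is that each recommendation is logged with its inputs and model version, and no action runs until a named person has approved it.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AuditRecord:
    """One reconstructable decision: what went in, what came out, who signed off."""
    model_version: str
    inputs: dict
    recommendation: str
    approver: Optional[str] = None    # a named individual, not a role
    approved: Optional[bool] = None   # None until a human decides
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def digest(self) -> str:
        # SHA-256 of the serialized record, so later edits are detectable.
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


class ApprovalGate:
    """The system recommends; a human approves; only then does anything execute."""

    def __init__(self):
        self.log = []

    def recommend(self, model_version, inputs, recommendation):
        record = AuditRecord(model_version, inputs, recommendation)
        self.log.append(record)  # logged before any human sees it
        return record

    def decide(self, record, approver, approved):
        if not approver:
            raise ValueError("a named approver is required")
        record.approver = approver
        record.approved = approved
        return approved

    def execute(self, record, action):
        # Refuse anything that lacks an explicit human approval.
        if record.approved is not True:
            raise PermissionError("no human approval on record")
        return action()
```

A production system would also need append-only storage, authenticated approvers, and retention rules. The sketch only shows the shape of the control: the override lives in the code path, not in a policy document.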

These aren't theoretical safeguards. They're the mechanisms that transform AI from a risk amplifier into a force multiplier. Without them, you have clever technology. With them, you have capability.

What's Coming Next

AI will continue reshaping military operations along several axes. Decision timelines will keep compressing as faster data processing accelerates operational cycles. Situational awareness will improve as data fusion and analytics enhance what commanders can actually see and understand. Military doctrine, and the ethical frameworks built into it, will have to evolve continuously to keep pace, because what works today may need revision as capabilities change. And strategic competition between nations will increasingly hinge on who can integrate AI effectively into logistics, cyber operations, and intelligence.

The trajectory of all of this (whether it trends toward stability or chaos) depends almost entirely on governance discipline. The technology will keep advancing regardless. The question is whether the institutional structures around it advance at the same pace.

The Bottom Line

AI in the military isn't inherently destabilizing. Deploying it without structure is. When AI operates inside doctrine, inside accountability chains, and inside ethical frameworks, it strengthens mission capability. When it operates outside those structures, it erodes the legitimacy that military institutions depend on.

Sources

- Osborne, J. (2020, February 5). Marines with Marine Corps Forces Cyberspace Command observe computer screens at a cyber operations center at Fort Meade, Maryland [Photograph]. Defense Visual Information Distribution Service (DVIDS).

- U.S. Department of Defense. (2020, February 25). The Defense Department officially adopted five principles for ethical artificial intelligence [Graphic]. Defense Media Activity.