Although Google never confirms them all in detail, it’s estimated that there are over 200 SEO ranking factors. From content to backlinks and site speed to mobile optimization, SEO marketers have a myriad of facets to take into account.

As Google has grown cleverer in recent years, so has its algorithm. And one factor many SEOs are starting to consider more closely when optimizing sites is the importance that Google places on user security.

Cyber SEO factors are less direct than the likes of quality content or backlinks, but are just as vital when it comes to providing a great user experience. Poor security measures affect SEO in their own way, by obstructing or skewing your efforts to create a site in line with Google’s guidelines.

Read on as we look at the ultimate cost that cyber SEO factors can have on a site’s ranking, before breaking this down into nine prominent examples.

Blacklisting: The ultimate cost

Poor management of your cyber SEO can lead to the most severe Google penalty of all: a blacklisted site. This means that you’re no longer allowed to compete for spots on Google’s search result pages.

Given that over 50% of users find a site through organic search, and Google has 92% of the market share, this drastically reduces the chances of anyone ever finding you by searching online.

Algorithmic penalties

As we know, Google uses its algorithm to judge the quality of a website. If your website fails to match up to its expectations of what a good site entails, you’ll drop down the rankings.

Some of Google’s more ground-breaking algorithm updates include Penguin, which saw sites with dodgy link profiles get a thorough spanking, or Panda, which demonized thin content.

Now, as a webmaster, you may have a delightful content and link strategy that’s perfectly in line with Google’s algorithm. But sites with poor cyber SEO are open to hacking, and hackers may be damaging all of your hard work.

The value of a more in-depth SEO strategy is clear here. Instead of trying to handle the traditional audit/content creation/link-earning circus yourself, a consulting organization (such as enterprise SEO services) could help you deploy various optimization strategies—including keeping a clear eye on cyber SEO.

Manual penalties

You’ll be notified of a manual penalty by Google through your Search Console account. Instead of the algorithm being the judge, a Google representative will have discovered something on your site that’s against their guidelines.

There’s a step-by-step process to getting a manual penalty removed, but it can be a time-consuming one. In the meantime, your site will be blacklisted from ranking.

The full list of reasons for penalties can be found on Google’s own manual actions page, and this includes a host of site issues which can be caused by hackers—all because of poor website security.

9 ways that website security affects SEO

No SSL certificate means fewer users

Let’s start with the most obvious of all cyber SEO issues. Google confirmed back in 2014 that HTTPS is an SEO ranking factor. An SSL (Secure Sockets Layer) certificate, which in practice now means its successor TLS, lets the browser verify the server’s identity during the handshake and encrypts the data exchanged between the two.

As a result, monetary transactions are made secure, and so more likely. Knowledgeable customers nowadays won’t stay on a website that doesn’t offer HTTPS security. This won’t just mean a drop in traffic for websites stuck on HTTP, but high bounce rates as well.

High bounce rates may not be a direct SEO ranking factor, but they certainly have indirect consequences. If a high number of users are landing on a website and then immediately clicking off it, it can send signals to Google’s algorithm that the page in question isn’t relevant to the initial search query. And this will impact your rankings, as Google seeks to show ever-more relevant results.
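One practical check after moving to HTTPS is to look for pages that still reference plain `http://` resources. The sketch below is only an illustration: the class and function names are hypothetical, and it inspects nothing more than `src` and `href` attributes in a page’s HTML.

```python
from html.parser import HTMLParser


class InsecureLinkFinder(HTMLParser):
    """Collects src/href attribute values that still use plain http://."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            # Attribute names arrive lower-cased; values can be None.
            if name in ("src", "href") and value and value.lower().startswith("http://"):
                self.insecure.append((tag, value))


def find_insecure_urls(html):
    """Return (tag, url) pairs for every http:// reference found in the HTML."""
    finder = InsecureLinkFinder()
    finder.feed(html)
    return finder.insecure


if __name__ == "__main__":
    sample = '<img src="http://example.com/a.png"><a href="https://example.com/">Home</a>'
    for tag, url in find_insecure_urls(sample):
        print(f"<{tag}> still points at {url}")
```

Running it over your templates or a crawl export gives a quick list of references to update. It won’t catch URLs built by scripts at runtime.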

Outdated software increases vulnerability

Most of us have ignored an “Update Now” flash from the platform we’re running, especially if we’re right in the middle of a highly urgent project. But doing so can be disastrous.

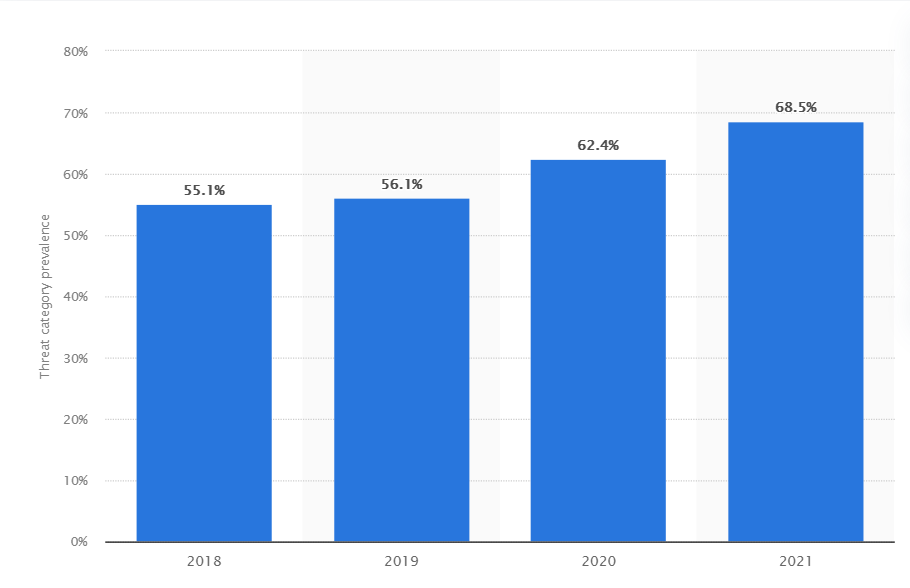

Everything from your CMS to your operational platform, security software, and plug-ins will require an upgrade from time to time. Don’t put it off. As we can see from the chart below, cyber attacks have enjoyed a glorious rise in recent years.

Percentage of organizations victimized by ransomware attacks

If your site is hacked through vulnerable software, everything recorded on it is fair game for the beast behind the keyboard. And they’ll have more important concerns than trying to work within Google’s SEO algorithms.

Spamming can result in penalties

Spamming is one of the most popular tactics a hacker may utilize, after they’ve found a vulnerability through outdated software.

Spamming usually whacks a pile of content and links onto a user’s website. A hacker may even replace your existing content with their own. The links will redirect to pages unassociated with your own site, and so go against Google’s guidelines. Penguin penalty, anyone?

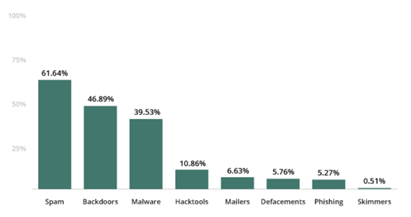

To put the scale of this problem into context, security platform Sucuri reported that almost 62% of their client sites contained SEO spam in a 2019 study, making it the most common of all cyber SEO factors.

Why would hackers do this? Well, it’s merely a way of sending spam content to people. They need to hijack a legitimate website to do this, as far fewer people would visit a worthless-looking one.

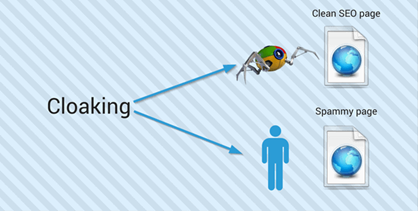

Many will also use a process of “cloaking” to hide their handiwork. Users see the spam version of the page, but Google’s crawlers see the original. Google will notice eventually, and a penalty will come your way. Webmasters need to keep a very close eye on their content.
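A rough way to keep that close eye is to request the same page twice, once presenting a browser user agent and once presenting a crawler user agent, and compare what comes back. This is a minimal sketch under stated assumptions: the function names and the 0.9 threshold are hypothetical, and cloaking that keys on IP address rather than user agent won’t show up in this kind of check.

```python
import difflib
import urllib.request

BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"
CRAWLER_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"


def fetch(url, user_agent, timeout=10):
    """Download a page while presenting the given User-Agent header."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


def similarity(page_a, page_b):
    """Return a 0..1 ratio describing how alike two HTML documents are."""
    return difflib.SequenceMatcher(None, page_a, page_b).ratio()


def looks_cloaked(page_for_browser, page_for_crawler, threshold=0.9):
    """Flag the page when the two versions differ more than the threshold allows."""
    return similarity(page_for_browser, page_for_crawler) < threshold
```

In use, you would call `fetch(url, BROWSER_UA)` and `fetch(url, CRAWLER_UA)` for each important URL and pass both results to `looks_cloaked`. Pages with rotating ads or timestamps will never match exactly, which is why the comparison uses a threshold rather than strict equality.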

Deleted content

On top of spamming, hackers may simply delete the files from your content management system (CMS), leading to a host of 404 pages. Google understands that the odd 404 page is inevitable on a website, but an excess of these will harm your SEO.

All the work your content team has put into creating pillar pages and topic clusters can be gone overnight. Your site can quickly go from being an expert authority to one that’s failing to provide content to match users’ search intent.

Losing user trust

Word can get out that the security of a website has been compromised. For larger brands, it may even make the national press. And if a breach includes user data, it can be a gargantuan task to rebuild confidence amongst your customer base.

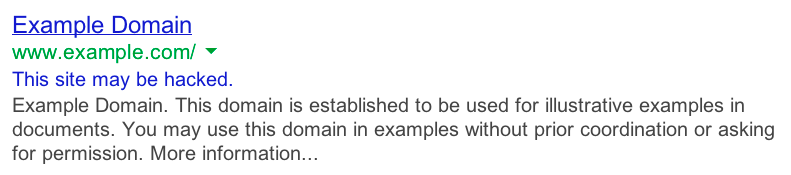

If Google discovers a breach, you may see warnings like this in the SERPs:

Bad memories linger. Even when you’ve sorted out the problem, adopted all the latest security and compliance measures, built a new site, and have the most sparkling SEO profile, you can’t survive without users. And a lack of clicks will mean a drop in ranking.

Duplicate content

A large amount of duplicate content is bad for SEO. The duplication is usually the work of site scrapers, who copy your pages elsewhere following a security breach. Before you’re even aware that your site has been replicated, Google could hit you with an SEO penalty, believing you to be the one doing all the duplicating.

There are methods you can use to make it clear that you’re the original creator of the content. These range from adding copyrights to verifying your content by identifying the author in the page’s HTML code. But the safest method of all is to keep better checks on your cyber SEO and ensure no one hacks your site in the first place.
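To see whether a page actually carries those ownership signals, you can read them straight out of the HTML. The sketch below assumes the author is declared with a standard `<meta name="author">` tag. It also looks for a `<link rel="canonical">` tag, which is an addition here rather than something named above. The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class OwnershipSignals(HTMLParser):
    """Records the author meta tag and canonical link found in a page."""

    def __init__(self):
        super().__init__()
        self.author = None
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "author":
            self.author = attributes.get("content")
        elif tag == "link" and "canonical" in (attributes.get("rel") or "").lower().split():
            self.canonical = attributes.get("href")


def ownership_signals(html):
    """Return the author and canonical URL declared in the HTML, or None for each."""
    parser = OwnershipSignals()
    parser.feed(html)
    return {"author": parser.author, "canonical": parser.canonical}


if __name__ == "__main__":
    page = (
        '<head><meta name="author" content="Nick Brown">'
        '<link rel="canonical" href="https://example.com/post"></head>'
    )
    print(ownership_signals(page))
```

A page that returns `None` for both values gives search engines less to go on when deciding which copy is the original.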

Distorted analytics make for bad SEO strategies

So, we’ve seen how poor security can affect various SEO ranking factors. It can also directly affect your SEO strategies by playing havoc with your data.

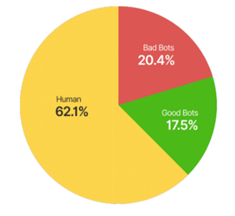

Crawlers with less-than-moral intentions are thought to generate over 20% of web traffic; a frightening thought.

If your traffic figures are being greatly inflated by dodgy crawlers, you could be creating SEO strategies based on false figures. Never a good idea in a data-led universe.
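As a first pass at cleaning those figures, you can separate hits whose user agent identifies itself as a bot from everything else. This is a deliberately simple sketch: the keyword pattern and function names are assumptions, and the less scrupulous crawlers often pose as ordinary browsers, so treat the result as a lower bound.

```python
import re

# Keywords that commonly appear in self-identifying crawler user agents.
BOT_PATTERN = re.compile(r"bot|crawl|spider|scrape", re.IGNORECASE)


def split_traffic(user_agents):
    """Count hits as (humans, bots); empty user agents are treated as bots."""
    humans, bots = 0, 0
    for user_agent in user_agents:
        if not user_agent or BOT_PATTERN.search(user_agent):
            bots += 1
        else:
            humans += 1
    return humans, bots


def bot_share(user_agents):
    """Return the fraction of hits attributed to bots, or 0.0 for no traffic."""
    humans, bots = split_traffic(user_agents)
    total = humans + bots
    return bots / total if total else 0.0


if __name__ == "__main__":
    hits = [
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
        "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
        "ExampleScraper/1.0",
    ]
    print(f"Bot share: {bot_share(hits):.0%}")
```

Feeding it the user-agent column from your server logs gives a quick sense of how far the headline traffic number can be trusted.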

Server overload can block Google bots

Too many dodgy bots crawling your site can affect your server capacity. Having your capacity clogged up by unwanted guests will slow your website down—an SEO ranking factor in itself. It may also stop Google’s bots from successfully crawling your site. And an improper crawl means improper rankings.

It’s possible to block specific bots from your website, or to stop all bots from accessing certain parts of the site to reduce the strain on the server. And if it’s a search engine’s own bots that are causing overload, you can usually adjust settings to limit the crawl rate.
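The usual place to express both kinds of rule is a `robots.txt` file. The sketch below pairs a small example file with Python’s standard `urllib.robotparser` module to confirm the rules behave as intended. The bot name `BadBot` and the `/search/` path are hypothetical, and bear in mind that `robots.txt` is advisory: well-behaved crawlers honour it, while malicious ones may simply ignore it.

```python
from urllib.robotparser import RobotFileParser

# Example rules: shut one named bot out entirely, and keep every
# other crawler away from the internal search pages.
ROBOTS_TXT = """\
User-agent: BadBot
Disallow: /

User-agent: *
Disallow: /search/
"""


def build_parser(robots_txt):
    """Parse robots.txt text into a RobotFileParser ready for can_fetch()."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    rules = build_parser(ROBOTS_TXT)
    for agent, url in [
        ("BadBot", "https://example.com/blog/"),
        ("Googlebot", "https://example.com/blog/"),
        ("Googlebot", "https://example.com/search/results"),
    ]:
        verdict = "allowed" if rules.can_fetch(agent, url) else "blocked"
        print(f"{agent} -> {url}: {verdict}")
```

Testing rules this way before deploying them helps you avoid accidentally locking search engines out of pages you want indexed.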

Downtime: No site, no rankings

Finally, a range of cyber attacks can see your site go down, for minutes, days, or sometimes even weeks. If users can’t access your site, they’ll stop trying to.

Google will return to a site that it’s unable to crawl, but if you’ve been subjected to the likes of ransomware, you’ve a problem that can take a long time to fix, meaning a lot of downtime. And this in turn will impact your SERP rankings.

Conclusion

Most webmasters will already be taking security seriously, purely as an anti-hacking measure. They may not realize the direct consequences a breach can have on their organic search potential, nor the long-term implications of this.

It’s essential to judge cybersecurity from an enterprise SEO perspective—one with an eye on the bigger picture. Knowing what to look for with SEO in mind will add a further layer of security to your existing tests, updates, and processes.

And with any luck, you’ll be able to spot signs of a breach before Google does.

Bio:

Nick Brown - accelerate agency